In the age of AI, the focus has been on how good AI is at catching and validating information. Humans are not the best at QA, but in reality that is exactly what everyone needs to do with AI content.

We have all heard the story of the lawyers who filed briefs citing cases that AI made up. Hallucinations are a major obstacle to trusting today’s AI output. But much like humans fat-finger their inputs, AI (ChatGPT) has a way of being inconsistent with itself.

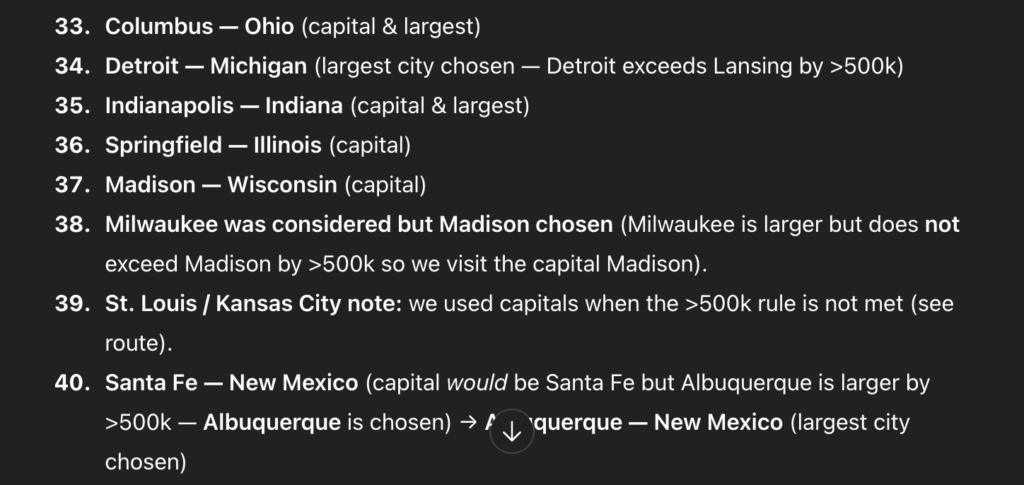

When asked to create a table of state capitals, substituting a larger city based on population, the AI seemed to do well. But only after looking at each row can you truly validate how correct the output is. In a run to create a sales route through the US capitals, replacing the capital with a large city (population of 500K or more), the AI was inconsistent. For Illinois, it did not even consider Chicago, which one would argue has more visibility than Detroit and Milwaukee.
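One way to make that row-by-row check less painful is to write the rule down as code and let it flag the rows that disagree. The following is only a minimal sketch under some assumptions: the rule is read as "use the state's largest city with a population of at least 500K, otherwise keep the capital"; the population figures are rounded and illustrative, so they should be replaced with numbers from a trusted source; and `ai_rows` is a hypothetical stand-in for the AI's table, not a copy of the actual output.

```python
# Assumed cutoff, based on the "500K" rule in the prompt.
THRESHOLD = 500_000

# Rounded, illustrative populations (assumption); swap in trusted census figures.
REFERENCE = {
    "Illinois": {"capital": "Springfield",
                 "cities": {"Springfield": 114_000, "Chicago": 2_700_000}},
    "Michigan": {"capital": "Lansing",
                 "cities": {"Lansing": 113_000, "Detroit": 639_000}},
    "Wisconsin": {"capital": "Madison",
                  "cities": {"Madison": 270_000, "Milwaukee": 577_000}},
}


def expected_stop(state):
    """Return the largest city at or above THRESHOLD, else the capital."""
    entry = REFERENCE[state]
    big = {city: pop for city, pop in entry["cities"].items() if pop >= THRESHOLD}
    if big:
        return max(big, key=big.get)
    return entry["capital"]


def find_mismatches(ai_rows):
    """Return (state, ai_city, expected_city) for every row that breaks the rule."""
    return [
        (state, city, expected_stop(state))
        for state, city in ai_rows
        if city != expected_stop(state)
    ]


# Hypothetical AI output mirroring the inconsistency described above.
ai_rows = [
    ("Illinois", "Springfield"),
    ("Michigan", "Detroit"),
    ("Wisconsin", "Milwaukee"),
]

for state, got, want in find_mismatches(ai_rows):
    print(f"{state}: AI gave {got}, rule expects {want}")
```

The catch is that the check is only as good as the reference data and the reading of the rule, which is exactly the baseline knowledge a reviewer has to bring.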

As companies rush to have users adopt an AI-first approach, or even learn new subjects from AI, users need a baseline understanding of the subject. Just because AI leads you to water does not mean what you see is actually water. With time spent validating each part of the AI process, the efficiency gains start to disappear, even more so when one incorrect data point forces you to validate an entire data set.